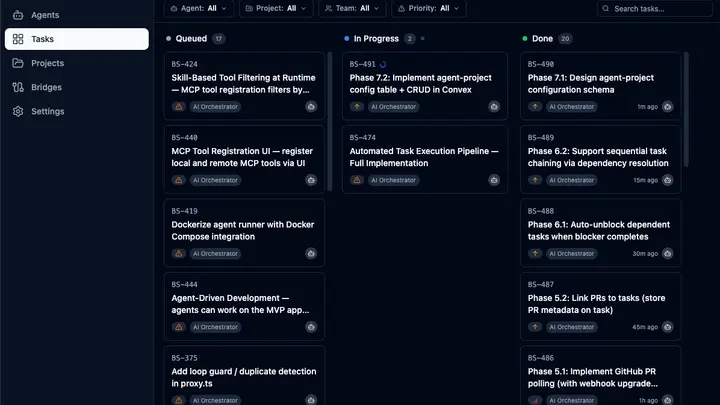

This is a screenshot from tonight. We're building NRNS with NRNS — the agent runner is working through our own backlog while we write this post.

What you're looking at: 17 tasks queued, 2 in progress, 20 completed. Every card tagged "AI Orchestrator" was picked up, worked on, and finished by an agent. The timestamps on the right (1 minute ago, 15 minutes ago, 30 minutes ago) are real. The agent has been steadily churning through the backlog.

What the agent is building

If you read the task titles, you'll notice something: the agent is building its own execution pipeline. The cards cover Phase 4 through Phase 7 of the automated task system: PR linking, GitHub polling, sequential task chaining, dependency resolution, auto-unblocking, and agent-project configuration.

It's building the infrastructure that makes it better at building things. There's something recursive about watching an AI agent implement "auto-unblock dependent tasks when blocker completes" — a feature that will make its own future task execution smoother.

How the board works

The task board is a kanban view. Queued tasks are waiting to be picked up. When the orchestrator is ready for the next task, it pulls from the queue based on priority and dependencies. Tasks move to In Progress while the agent works — writing code, running tests, opening PRs. When verification passes, they move to Done.
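
The pull step described above can be sketched roughly like this. It is a minimal illustration, not the actual NRNS code: the `Task` fields, the status strings, and the convention that a lower `priority` number means more urgent are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    # Hypothetical task shape; every field name here is an assumption.
    id: str
    priority: int                 # lower number = more urgent (assumed convention)
    status: str = "queued"        # "queued" | "in_progress" | "done"
    depends_on: list[str] = field(default_factory=list)


def next_task(tasks: dict[str, Task]) -> Optional[Task]:
    """Return the most urgent queued task whose dependencies are all done."""
    ready = [
        t for t in tasks.values()
        if t.status == "queued"
        and all(tasks[dep].status == "done" for dep in t.depends_on)
    ]
    return min(ready, key=lambda t: t.priority, default=None)


# Illustrative board: "chaining" is the most urgent card, but it is blocked.
tasks = {
    "polling": Task("polling", priority=2, status="done"),
    "link-prs": Task("link-prs", priority=1, depends_on=["polling"]),
    "chaining": Task("chaining", priority=0, depends_on=["link-prs"]),
}
picked = next_task(tasks)  # the "link-prs" task, since "chaining" is still blocked
```

In this sketch priority only breaks ties among tasks that are already unblocked, so a blocked card never jumps the queue no matter how urgent it is.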

The yellow warning icons on queued tasks mean they're blocked — waiting on another task to finish first. The green arrows on done tasks mean they completed successfully. The blue spinner on in-progress tasks means the agent is actively working.

Notice the sequential pattern in the Done column: Phase 3.3, then 4.1, then 5.1, then 5.2, then 6.1, then 6.2, then 7.1. The agent is working through phases in order, respecting dependencies. It can't start "Link PRs to tasks" before "Implement GitHub PR polling" is done. The system enforces this automatically.
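
One way to picture that enforcement, including the auto-unblock step, is the sketch below. It is an assumption-laden illustration rather than the real scheduler: `run_in_dependency_order` is a hypothetical helper, and the only dependency edge used is the one named in this post.

```python
from collections import defaultdict


def run_in_dependency_order(blockers: dict[str, set[str]]) -> list[str]:
    """Complete tasks one at a time, unblocking dependents as blockers finish.

    `blockers` maps each task title to the set of task titles it waits on.
    """
    remaining = {task: set(deps) for task, deps in blockers.items()}
    dependents: dict[str, set[str]] = defaultdict(set)
    for task, deps in blockers.items():
        for dep in deps:
            dependents[dep].add(task)

    # Start with every task that has nothing blocking it.
    ready = sorted(task for task, deps in remaining.items() if not deps)
    completed: list[str] = []
    while ready:
        task = ready.pop(0)
        completed.append(task)
        # Auto-unblock: drop the finished task from each dependent's blocker set.
        for child in sorted(dependents[task]):
            remaining[child].discard(task)
            if not remaining[child]:
                ready.append(child)

    if len(completed) != len(blockers):
        raise ValueError("dependency cycle or unknown blocker")
    return completed


order = run_in_dependency_order({
    "Link PRs to tasks": {"Implement GitHub PR polling"},
    "Implement GitHub PR polling": set(),
})
# order == ["Implement GitHub PR polling", "Link PRs to tasks"]
```

A task that waits on itself, or on something that never appears on the board, never becomes ready, which is why the sketch raises instead of silently skipping it.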

What happens behind each card

Each task card is a full development cycle. The agent reads the task description, explores the codebase to understand context, writes the implementation in an isolated git worktree, runs type-checks and linting, and opens a PR. If checks fail, it fixes them before marking the task done.
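
The check-and-fix part of that cycle might look something like the loop below. Treat it as a sketch under stated assumptions: `run_cycle`, its callback parameters, the retry limit, and the `"needs_human"` outcome are all hypothetical, and for simplicity it opens the PR only after the checks pass.

```python
from typing import Callable


def run_cycle(
    implement: Callable[[], None],
    checks: dict[str, Callable[[], bool]],
    fix: Callable[[list[str]], None],
    open_pr: Callable[[], None],
    max_fix_attempts: int = 3,
) -> str:
    """Implement once, then re-run the checks until they pass or attempts run out."""
    implement()
    for attempt in range(max_fix_attempts + 1):
        failed = [name for name, check in checks.items() if not check()]
        if not failed:
            open_pr()
            return "done"
        if attempt < max_fix_attempts:
            fix(failed)  # hand the failing check names to the fixer
    return "needs_human"


# Simulated run: linting fails twice before the fixes land.
state = {"lint_errors": 2, "pr_opened": False}


def fix_lint(failed: list[str]) -> None:
    state["lint_errors"] -= 1


result = run_cycle(
    implement=lambda: None,
    checks={
        "typecheck": lambda: True,
        "lint": lambda: state["lint_errors"] == 0,
    },
    fix=fix_lint,
    open_pr=lambda: state.update(pr_opened=True),
)
# result == "done"
```

Capping the fix attempts gives the loop a clean exit for the cases where the agent cannot resolve a failure on its own.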

"Phase 7.1: Design agent-project configuration schema" — the one in progress right now — means the agent is reading our existing schema, understanding the data model, designing a new table structure, writing it, and making sure it integrates with the rest of the system. That's not autocomplete. That's actual engineering work.

The sidebar tells the story too

Look at the left side: Agents, Tasks, Projects, Bridges, Settings. This isn't a code editor with an AI sidebar. It's a workspace where agents and humans share the same tools. The task board doesn't have a special "AI tasks" section — agent tasks and human tasks live in the same view, same filters, same workflow.

That's the whole idea. Agents aren't a separate system you check on. They're working the same board you are.

Still early

This is our internal build. Not everything works perfectly — some tasks in that queue will probably need human intervention when the agent hits something it can't figure out. But 20 completed tasks in a session, working through phased dependencies, with real PRs at the end? That's the thing working.

More sneak peeks coming as we get closer to early access. If you want to see this for yourself, the waitlist is open.